Do you know the difference between an AI-assisted paper and an AI-generated paper (AIGP)?

They sound similar, but they describe two very different ways of using AI in knowledge production.

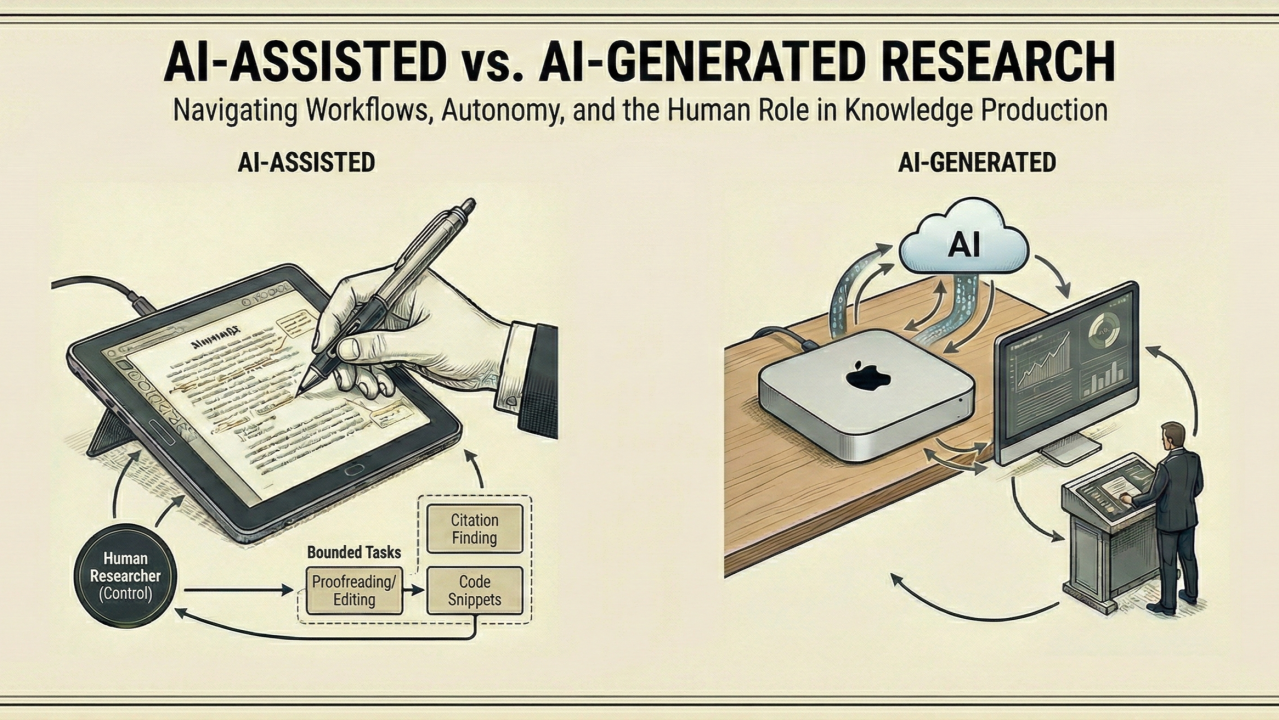

AI-assisted papers are the more familiar case. If you’ve only used web-based AIs, such as ChatGPT or Gemini, this is probably what you picture when you think of AI in research. In an AI-assisted paper, a human researcher remains firmly in control and uses these tools for bounded tasks: proofreading, editing, finding potential papers to cite, critiquing a manuscript to improve clarity, or generating small code snippets. The workflow is human-driven and the AI functions as an advanced instrument, closer to a spellchecker, a statistical package, or a search engine.

AI-generated papers, or AIGPs, involve systems with a higher degree of operational autonomy. They rely not on web-based chats but on AI agents running in a computer terminal, working in an iterative loop in which the agent plans and executes substantial portions of the research process. The agents get to download and install software, write code, perform analyses, generate figures, and compile manuscripts with LaTeX. The human here acts as an advisor, providing prompts, constraints, and feedback, in a relationship similar to that between an advisor and a graduate student, where the advisor critiques and guides the student’s work based on the output the student has produced.

Distinguishing these categories matters because they correspond to different workflows, risks, and evaluation challenges. They are also technologies at different levels of maturity. AI-assisted research builds on a relatively mature capability: AI chat interfaces are great proofreaders and have become increasingly reliable at bounded cognitive tasks (when using thinking mode in a paid app, of course*).

AIGPs, by contrast, are a more recent and more experimental technology. Yet this is precisely why the space is intellectually interesting. While the average quality may still be low, the potential for dramatically higher research throughput is real, making this a moment to explore and set norms.

Humans remain central in both paradigms but play different roles. In AI-assisted work, they are clearly in the driver’s seat. It is thus not surprising that a researcher who regularly produces good work can produce good AI-assisted work. In AIGPs, researchers act as supervisors, shaping trajectories and evaluating outputs. Whether AIGPs can become good remains to be seen, although there are some interesting cases, such as Kosmos, an AI scientist developed at Edison Scientific.

In both cases, a useful analogy may be that of a paintbrush. Like a brush, AI is a tool that is basically available to everyone. But access to a brush does not make everyone a Michelangelo. Tools can make differences in skill and vision even more visible. AI in research is likely to function the same way. Scholars who are good at debugging from output and giving feedback to graduate students might turn out to be better at producing AIGPs.

Keeping the distinction between AI-assisted and AI-generated papers clear, and providing different venues for AI-assisted, AI-generated, and purely human papers, will help journals, institutions, and scholars develop norms that match our evolving technological reality.

Who knows how research will be produced in 10 or 20 years? My intuition is that when it comes to AIGPs, today we are at the same stage as AI video was in that 2023 clip of Will Smith eating pasta.

Hold on to your seats and stay curious.

*I added this comment because I have met many colleagues who have a poor opinion of what AI tools can do but have never tried a paid version (paid versions are vastly superior to the free ones in quality).